
|

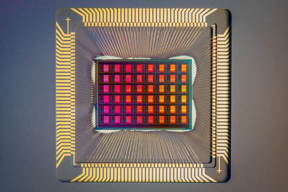

| The NeuRRAM chip is not only twice as energy efficient as state-of-the-art, its also versatile and delivers results that are just as accurate as conventional digital chips.

CREDIT David Baillot/University of California San Diego |

Abstract:

AI-powered edge computing is already pervasive in our lives. Devices like drones, smart wearables, and industrial IoT sensors are equipped with AI-enabled chips so that computing can occur at the edge of the internet, where the data originates. This allows real-time processing and guarantees data privacy.

New chip ramps up AI computing efficiency

Menlo Park, CA | Posted on August 19th, 2022

However, AI functionalities on these tiny edge devices are limited by the energy provided by a battery. Therefore, improving energy efficiency is crucial. In today's AI chips, data processing and data storage happen at separate places: a compute unit and a memory unit. The frequent data movement between these units consumes most of the energy during AI processing, so reducing the data movement is the key to addressing the energy issue.

Stanford University engineers have come up with a potential solution: a novel resistive random-access memory (RRAM) chip that does the AI processing within the memory itself, thereby eliminating the separation between the compute and memory units. Their compute-in-memory (CIM) chip, called NeuRRAM, is about the size of a fingertip and does more work with limited battery power than what current chips can do.

"Having those calculations done on the chip instead of sending information to and from the cloud could enable faster, more secure, cheaper, and more scalable AI going into the future, and give more people access to AI power," said H.-S. Philip Wong, the Willard R. and Inez Kerr Bell Professor in the School of Engineering.

"The data movement issue is similar to spending eight hours in commute for a two-hour workday," added Weier Wan, a recent graduate at Stanford leading this project. "With our chip, we are showing a technology to tackle this challenge."

They presented NeuRRAM in a recent article in the journal Nature. While compute-in-memory has been around for decades, this chip is the first to actually demonstrate a broad range of AI applications on hardware, rather than through simulation alone.

Putting computing power on the device

To overcome the data movement bottleneck, researchers implemented what is known as compute-in-memory (CIM), a novel chip architecture that performs AI computing directly within memory rather than in separate computing units. The memory technology that NeuRRAM uses is resistive random-access memory (RRAM). It is a type of non-volatile memory (memory that retains data even once power is off) that has emerged in commercial products. RRAM can store large AI models in a small area footprint and consumes very little power, making it well suited to small, low-power edge devices.
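As a rough illustration of the compute-in-memory idea, the sketch below models an idealized memory array in which stored weights act as conductances, inputs are applied as voltages, and each output is the sum of the per-cell products. This is a generic textbook model, not NeuRRAM's actual circuit. The function names, the `g_max` parameter, and the use of a positive/negative conductance pair per weight are assumptions made for the example.

```python
# Generic, idealized crossbar model of compute-in-memory.
# Assumption: this is an illustration only, not the NeuRRAM design.
# Each signed weight is stored as a pair of non-negative conductances,
# the input vector is applied as voltages, and each output sums the
# per-cell contributions, so the multiply-accumulate happens "in memory".

def weights_to_conductances(weights, g_max=1.0):
    """Map signed weights to (g_pos, g_neg) pairs; the largest |w| maps to g_max."""
    w_max = max(abs(w) for row in weights for w in row) or 1.0
    scale = g_max / w_max
    g_pos = [[max(w, 0.0) * scale for w in row] for row in weights]
    g_neg = [[max(-w, 0.0) * scale for w in row] for row in weights]
    return g_pos, g_neg, scale

def crossbar_matvec(g_pos, g_neg, voltages):
    """Idealized array output: out[i] = sum_j (g_pos[i][j] - g_neg[i][j]) * V[j]."""
    return [
        sum((gp - gn) * v for gp, gn, v in zip(row_p, row_n, voltages))
        for row_p, row_n in zip(g_pos, g_neg)
    ]

def in_memory_matvec(weights, inputs):
    """Program the weights once, then compute weights @ inputs inside the array model."""
    g_pos, g_neg, scale = weights_to_conductances(weights)
    outputs = crossbar_matvec(g_pos, g_neg, inputs)
    return [out / scale for out in outputs]  # undo the conductance scaling

# Hypothetical 2x2 weight matrix and input vector, chosen only for the demo.
weights = [[0.5, -1.0], [2.0, 0.25]]
inputs = [1.0, 2.0]
result = in_memory_matvec(weights, inputs)
print(result)
```

The point of the model is that the weights never leave the array: only the input voltages and the summed outputs cross its boundary, which is the data movement saving described above.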

"Even though the concept of CIM chips is well established, and the idea of implementing AI computing in RRAM isn't new, this is one of the first instances to integrate a lot of memory right onto the neural network chip and present all benchmark results through hardware measurements," said Wong, who is a co-senior author of the Nature paper.

The architecture of NeuRRAM allows the chip to perform analog in-memory computation at low power and in a compact-area footprint. It was designed in collaboration with the lab of Gert Cauwenberghs at the University of California, San Diego, who pioneered low-power neuromorphic hardware design. The architecture also enables reconfigurability in dataflow directions, supports various AI workload mapping strategies, and can work with different kinds of AI algorithms, all without sacrificing AI computation accuracy.

To show the accuracy of NeuRRAM's AI abilities, the team tested how it functioned on different tasks. They found that it's 99% accurate in handwritten digit recognition from the MNIST dataset, 85.7% accurate on image classification from the CIFAR-10 dataset, and 84.7% accurate on Google speech command recognition, and that it showed a 70% reduction in image-reconstruction error on a Bayesian image recovery task.

"Efficiency, versatility, and accuracy are all important aspects for broader adoption of the technology," said Wan. "But to realize them all at once is not simple. Co-optimizing the full stack from hardware to software is the key."

"Such full-stack co-design is made possible with an international team of researchers with diverse expertise," added Wong.

Fueling edge computations of the future

Right now, NeuRRAM is a physical proof-of-concept but needs more development before it's ready to be translated into actual edge devices.

But this combined efficiency, accuracy, and ability to do different tasks showcases the chip's potential. "Maybe today it is used to do simple AI tasks such as keyword spotting or human detection, but tomorrow it could enable a whole different user experience. Imagine real-time video analytics combined with speech recognition all within a tiny device," said Wan. "To realize this, we need to continue improving the design and scaling RRAM to more advanced technology nodes."

"This work opens up several avenues of future research on RRAM device engineering, and programming models and neural network design for compute-in-memory, to make this technology scalable and usable by software developers," said Priyanka Raina, assistant professor of electrical engineering and a co-author of the paper.

If successful, RRAM compute-in-memory chips like NeuRRAM have almost unlimited potential. They could be embedded in crop fields to do real-time AI calculations for adjusting irrigation systems to current soil conditions. Or they could turn augmented reality glasses from clunky headsets with limited functionality to something more akin to Tony Stark's viewscreen in the Iron Man and Avengers movies (without intergalactic or multiverse threats, one can hope).

"If mass produced, these chips would be cheap enough, adaptable enough, and low power enough that they could be used to advance technologies already improving our lives," said Wong, "like in medical devices that allow home health monitoring."

They can be used to solve global societal challenges as well: AI-enabled sensors would play a role in tracking and addressing climate change. "By having these kinds of smart electronics that can be placed almost anywhere, you can monitor the changing world and be part of the solution," Wong said. "These chips could be used to solve all kinds of problems from climate change to food security."

Additional co-authors of this work include researchers from University of California San Diego (co-lead), Tsinghua University, University of Notre Dame, and University of Pittsburgh. Former Stanford graduate student Sukru Burc Eryilmaz is also a co-author. Wong is a member of Stanford Bio-X and the Wu Tsai Neurosciences Institute, and an affiliate of the Precourt Institute for Energy. He is also Faculty Director of the Stanford Nanofabrication Facility and the founding faculty co-director of the Stanford SystemX Alliance, an industrial affiliate program at Stanford focused on building systems.

This research was funded by the National Science Foundation Expeditions in Computing, SRC JUMP ASCENT Center, Stanford SystemX Alliance, Stanford NMTRI, Beijing Innovation Center for Future Chips, National Natural Science Foundation of China, and the Office of Naval Research.


Contacts:

Jill Wu

Stanford University

Copyright © Stanford University
